Good Monday Morning

It’s June 12. The Fed wraps up its meetings on Wednesday and is widely expected to defer raising rates after ten straight increases.

Today’s Spotlight is 1,210 words, about 4 1/2 minutes to read.

3 Stories to Know

1. Federal deposit insurance does not cover money stored in non-bank apps like PayPal or Venmo, warns the Consumer Financial Protection Bureau. In the U.S., 85% of adults aged 18-29 use peer-to-peer payment apps.

2. A toner manufacturers’ trade association has complained to the Global Electronics Council about HP’s new policy that prohibits consumers from using third-party toner. Consumers who accept HP’s offer of 6 months free ink for a new printer also opt into a program that requires using only HP ink for the life of the printer.

3. Big Tech companies still block their software from labeling any image as a gorilla eight years after Google’s app mislabeled two Black people as gorillas. The New York Times tested software from Google, Apple, Amazon, and Microsoft using images of people, animals, and objects. None of the four companies’ software identified a gorilla, although Google and Apple were able to identify the other animals.

Spotlight on Political Deception Online

With generative AI, creating political deception online has become more accessible to millions of people who don’t need advanced equipment or training to do so.

Within hours of Gov. Ron DeSantis’ announcement that he would run for president, a PAC allied with him faced criticism for an ad featuring fake fighter jets flying over his speech. The DeSantis campaign also posted a video last week that included three doctored images depicting Donald Trump hugging Dr. Anthony Fauci.

Researchers have anticipated escalating political deception online for years. Even though doctored videos were used during the 2016 and 2020 presidential elections, the upcoming election is the first in which virtually anyone can create a look-alike video.

Reversing the Past

In previous elections, Facebook, YouTube, and other Big Tech firms attempted to police misinformation and disinformation. Facebook and YouTube both changed internal algorithms to reduce the amount of political content shown to users. For Facebook especially, its focus on private groups proved detrimental to that effort. Last year, Mark Zuckerberg ended the program after political content during the 2022 election was shown only half as much as during the 2020 election.

However, Facebook’s advertising algorithms still allowed researchers to successfully submit ads calling for violence against election workers. Facebook’s system allowed 15 of 20 ads to proceed even though YouTube and TikTok halted them.

YouTube has reversed itself as well. The company said that videos with false complaints about elections will remain visible on the site. Content that misrepresents voting logistics and eligibility will continue to be removed from YouTube.

Violent Language Online Increasing

According to authorities, extreme expressions of political opinion online have increased since last week’s indictment of Donald Trump by a federal grand jury. GOP members of Congress are contributing to the extremist talk, including Rep. Clay Higgins (R-LA), who tweeted military-style information to followers, and Rep. Andy Biggs (R-AZ), who tweeted, “We have now reached a war phase. An eye for an eye.”

A doctored video posted by Donald Trump himself two days ago ratcheted up tensions yet again. The former president posted a video of himself hitting a golf ball, mashed up with sound effects and a video of President Biden falling while riding a bicycle, as if he had been struck by the ball. The video was reminiscent of Trump posting a picture of himself with an extended baseball bat next to an image of New York District Attorney Alvin Bragg, who had recently announced state charges against Trump.

Practical AI

Quotable: A UK firm found only half of adults could tell the difference between advertising emails written by generative AI and those written by a human copywriter.

Noteworthy: An attorney who claimed to use ChatGPT for legal research is the latest person to run afoul of model glitches called “hallucinations.” In his research, the software invented previous cases, which the attorney then included in a federal court filing. The judge in that case has ordered a hearing to review possible sanctions.

In a completely unrelated case, a contributor to a publication covering guns asked ChatGPT for information about a radio host. ChatGPT erroneously responded that the radio host had been accused of misappropriating funds. The radio host is now suing ChatGPT developer OpenAI for libel.

Tool of the Week: Adobe’s Generative AI Fill extends images pretty easily. By now you’ve probably seen images of the Mona Lisa or other famous pictures with surrounding scenes. The Verge has some interactive samples so you can see for yourself what the fuss is about.

Waiting in the Wings

- How algorithms are automatically denying medical claims

- Amazon’s data about you expands beyond shopping

Put your email address in the form at this link and you’ll get a free copy of Spotlight each Monday morning to start your week in the know.

If you’re already a free subscriber, would you please forward this to a friend who could use a little Spotlight in their Monday mornings? It would really help us out.

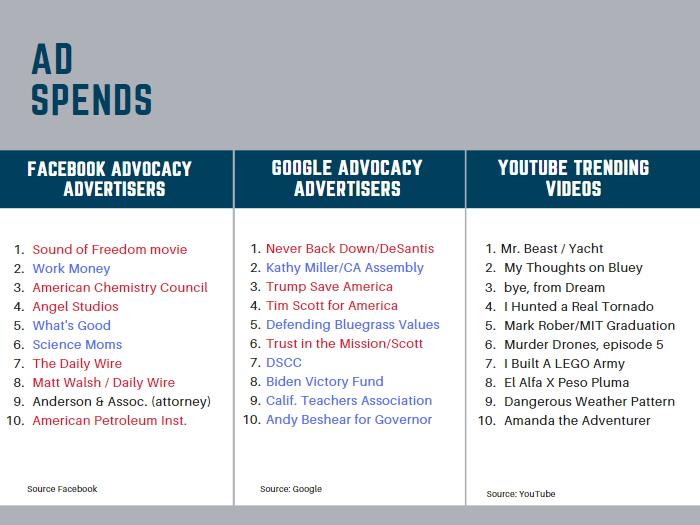

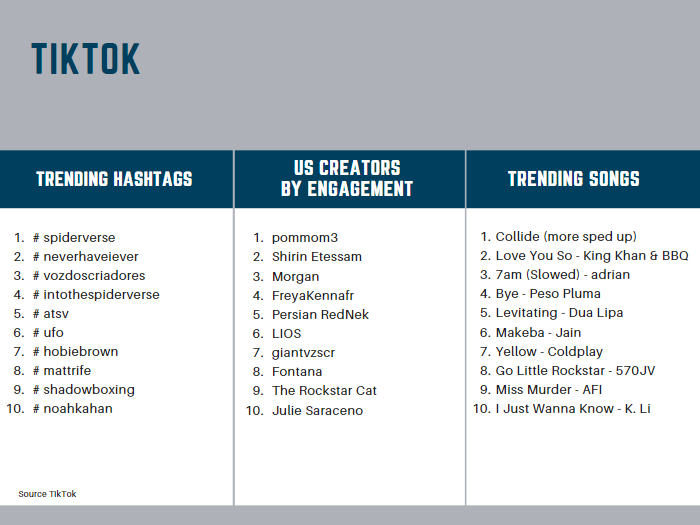

Trends, Spends & TikTok

Did That Really Happen? — Climate Change Conspiracy Theory Isn’t True

Podcaster Joe Rogan is being criticized again after a segment on his show included a nonsensical climate change theory that claims the Earth’s magnetic poles reverse themselves for 6 days every 6,500 years and then conveniently revert on the seventh day. That segment has now found its way into various viral spots, including TikTok.

Following Up — Airbnb Sues New York

We wrote extensively about Airbnb three months ago and suggested that more cities would be cracking down on the short-term rental platform. While that appears to be happening, Airbnb filed suit against New York City earlier this month for what it called “extreme” and “oppressive” rules that run counter to federal law.

Protip — Changing Android’s Keyboard Size

If you’re hitting the wrong keys on your Android phone’s on-screen keyboard more often than you would like, this ZDNet walkthrough on changing the keyboard’s size is just what you need.

Screening Room — Apple’s iPhone Privacy

Apple promotes the privacy settings of the iPhone’s Health app in this fun new spot.

Science Fiction World — Brain Implants

This technology is coming faster than perhaps anyone dared dream: an experimental brain implant that boosts nervous system signals is working. The BBC has coverage of a 40-year-old man who is paralyzed but can move his legs via the implant. It sounds farfetched, but the Lausanne University research has been published in Nature.

Coffee Break — Ride the Space Elevator

Climb in at ground level and ride this great Neal Agarwal interactive past birds, kites, and all the way up to space.