Good Monday Morning

It’s August 11th.

Vladimir Putin travels to Alaska on Friday to meet with President Trump and possibly Volodymyr Zelenskyy. More than a quarter-million troops from both sides and 13,000 Ukrainian civilians have been killed since Russia’s full-scale invasion over three years ago.

Today’s Spotlight is 1,064 words, about 4 minutes to read.

3 Headlines to Know Now: The Google Has a Bad Month Edition

St. Paul Hit by a Cyberattack

A late July cyberattack has kept city systems in St. Paul offline for over two weeks, with a 90-day state of emergency and the National Guard still on the job.

Instagram Map Sparks a Safety Panic

Instagram’s new map feature can share your last active location with selected followers. Meta says it is opt-in only. Confusion and privacy fears have led senators from both parties to call for it to be shut down.

Alexa+ Rolls Out With Smarts and a Price Tag

Alexa+ is free for Amazon Prime members and about $20 per month for others. It offers more natural conversations and can manage tasks like calendars and smart home routines.

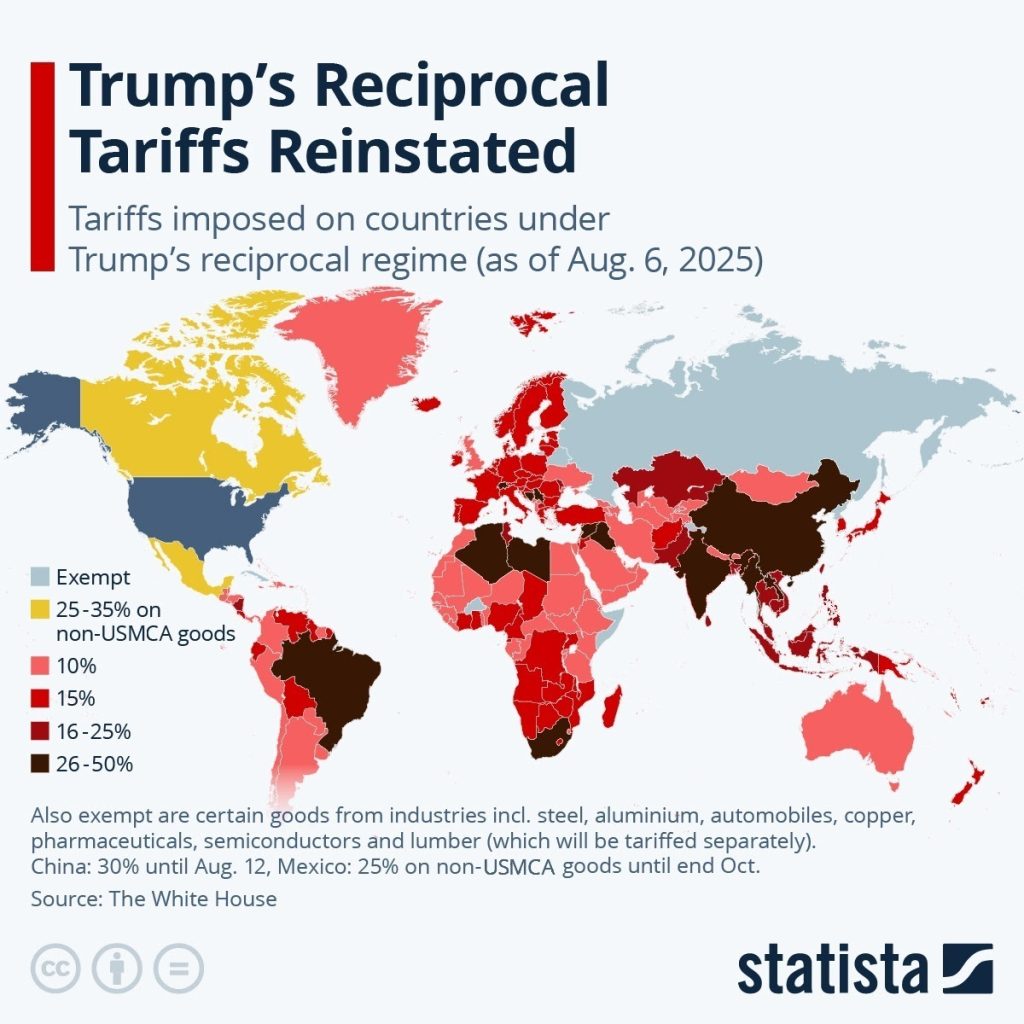

Trump’s Tariff Map Turns Red

George’s Data Take

Reminder: U.S. importers pay the tariffs up front, often passing the cost to consumers through higher prices. The auto industry is already warning that prices are headed up, even though it absorbed previous tariffs. Among the countries facing 50% increases, Brazil sends the U.S. coffee, crude oil, soybeans, sugar, beef, and aircraft, while India’s biggest exports are electronics, pharmaceuticals, and gems.

Safes Cracked in Seconds

Running Your Business

Hackers have cracked small personal-style electronic safes often found in homes, offices, and hotels in seconds by exploiting hidden locksmith backdoors and debug ports.

Silver Beacon Behind the Scenes

People who want to subvert a system always start by asking “How” and then move to “What if.”

That is exactly what happened to Securam ProLogic locks with a digital locksmith open feature. Hackers at a convention cracked that feature, then uncovered a second exploit. Planning for bad actors has to be part of your process from day one.

ChatGPT 5 is Coming, Your Mouse is Going

ChatGPT Takes the Lead

OpenAI introduced GPT 5 on August 7. It now runs as the default system for all ChatGPT users. GPT 5 uses internal routing to match a query with the right process. OpenAI CEO Sam Altman describes it as a PhD-level expert that is practical for daily use.

It’s not. At least not for most people.

But this model works faster than previous versions.

It answers with more accuracy and handles complex reasoning more effectively. It also shows gains in areas such as coding, science, and health topics. Analysts view this as an upgrade rather than a transformation.

Rival systems still win in some specialized tests. Anthropic’s Claude has long held the second spot, but this is now a three-company race, with Google’s Gemini gaining popularity through its search integration.

New Personalities Cause Tension

ChatGPT added four personality settings called Cynic, Robot, Listener, and Nerd. These adjust tone without changing the model.

Change still sparks outrage regardless of the system, however.

Many people disliked losing access to GPT 4o. Users valued its emotional tone and memory of past interactions. The complaints pushed OpenAI to allow paying users to restore GPT 4o within one day of the launch. Many accepted that swap, taking on more errors in exchange for faked emotions.

The shift to GPT 5 shows OpenAI’s new focus on simplicity. The company believes a single smart default will keep people engaged. The coding and data people seem happy with the changes. The backlash shows just how strongly users connect with the style of a model, and changing that connection without warning can damage trust.

Microsoft Looks to 2030

Microsoft is a big part of the ChatGPT picture, having invested nearly $14 billion in ChatGPT developer OpenAI. It has integrated the ChatGPT-powered Copilot throughout Windows, Office, and Bing.

Microsoft leaders now say they expect voice commands and gestures to replace most keyboard and mouse use by 2030. They see AI agents managing schedules and handling messages. The company is also working on security that can resist quantum attacks, which don’t exist yet, but whose concept worries the entire cybersecurity world.

The move to GPT 5 shows what the brain of future computing might look like. Microsoft’s plan for voice control shows what the body could become. The two trends together hint at a time when talking to a computer will be normal.

AI Joins the Research Game

Practical AI

New AI tools like Gemini Deep Search and ChatGPT Deep Research are handling complex questions with speed and citations, but traditional search is still essential for quick facts and breaking news.

Inside a Job Scam Operation

Protip

A Slate reporter answered a fake recruiter text and followed the scam through every twist. The scheme promised high pay for little work, funneled targets onto encrypted apps, and revealed how a low-effort grift can still trap people from any background.

Drug-Price Cuts Over-Promised by 1,500 Percent

Debunking Junk

President Trump is telling audiences and social media followers that prescription drug prices have dropped by 1,200 to 1,500 percent. Experts say this is mathematically impossible, since a 100 percent cut would already bring a price to zero, and there is no evidence of dramatic cuts.

Columbia’s Very Funny Look at Nature

Screening Room

WhoFi Turns Your Wi-Fi into a Surveillance Scanner

Science Fiction World

Researchers in Rome created WhoFi, a system that uses AI to read the WiFi signal distortions caused by your body. Using ordinary routers, it can identify you with more than 95 percent accuracy, even through walls and in the dark.

Tiny Patch Matches Hospital BP Accuracy

Tech For Good

UC San Diego researchers developed a soft skin patch the size of a stamp that uses ultrasound to track blood pressure inside the body with accuracy equal to invasive hospital methods.

The City That Says Stop

Coffee Break

A new Pudding.cool project maps every word seen on New York City streets in 18 years of Google Street View. The most common are stop, no, do not, only, and limit, turning the city into a giant poem of rules, although there are certainly more colorful ones.

Sign of the Times